Roger Chen

In this project, we compute homographies from a set of labeled feature points in a pair of images. Then, we use multiresolution blending to composite many images into a single panorama.

I used my DSLR and my cell phone camera to capture input images. I was able to set manual settings on both cameras, so that the exposure of the images would not change between shots.

I also created a point labeling web interface, which was used to label all of my image key points in these examples. The labeler supports adding, deleting, moving, loading, and saving arrays of points.

The first thing I did was to try some basic warping operations in order to test my computeH and warpImage functions. I took a picture of Wheeler Hall and warped it so that the face of the building was rectangular. This made the edge of the image very distorted, but I did get a good view of Wheeler Hall in the corner.
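As a rough illustration of what a function like computeH might do, here is a minimal NumPy sketch that estimates a homography from four or more labeled point pairs using the standard direct linear transform. This is not my actual code: the names `compute_h` and `apply_h` are hypothetical, and the choice of an SVD-based least-squares solve is an assumption.

```python
import numpy as np

def compute_h(src_pts, dst_pts):
    """Estimate the 3x3 homography that maps src_pts onto dst_pts.

    Both inputs are (N, 2) sequences of (x, y) points with N >= 4.
    """
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        # Each correspondence gives two linear constraints on the 9 entries of H.
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    A = np.array(rows)
    # The least-squares solution of A h = 0 is the right singular vector
    # associated with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]  # fix the arbitrary scale (assumes H[2, 2] != 0)

def apply_h(H, pts):
    """Apply homography H to an (N, 2) array of points."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]
```

For rectification, `src_pts` would be the four labeled corners of the building face and `dst_pts` the corners of the rectangle you want it to become.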

I tried the image rectification process on a different photo. This photo contains some buildings in Seattle, which were photographed from a high angle. I warped the image so that the large building in the center looks like it is rectangular.
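Once a homography is known, the warp itself can be done by inverse mapping: for every output pixel, look up where it came from in the source image. The sketch below assumes nearest-neighbor sampling to keep it short. The name `warp_image` and its parameters are hypothetical and this is not my warpImage function.

```python
import numpy as np

def warp_image(img, H, out_shape):
    """Warp img by homography H (source coords -> output coords).

    Uses inverse mapping with nearest-neighbor sampling. Output pixels that
    fall outside the source image are left as zero.
    """
    img = np.asarray(img)
    h, w = img.shape[:2]
    out_h, out_w = out_shape
    ys, xs = np.mgrid[0:out_h, 0:out_w]
    # Homogeneous coordinates of every output pixel, shape (3, out_h * out_w).
    dst = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])
    src = np.linalg.inv(H) @ dst
    with np.errstate(divide="ignore", invalid="ignore"):
        sx = src[0] / src[2]
        sy = src[1] / src[2]
    # Keep only output pixels whose source location lies inside the image.
    valid = np.isfinite(sx) & np.isfinite(sy)
    valid &= (sx > -0.5) & (sx < w - 0.5) & (sy > -0.5) & (sy < h - 0.5)
    out = np.zeros((out_h * out_w,) + img.shape[2:], dtype=img.dtype)
    ix = np.rint(sx[valid]).astype(int)
    iy = np.rint(sy[valid]).astype(int)
    out[valid] = img[iy, ix]
    return out.reshape((out_h, out_w) + img.shape[2:])
```

A smoother result would need bilinear or bicubic interpolation instead of rounding to the nearest source pixel.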

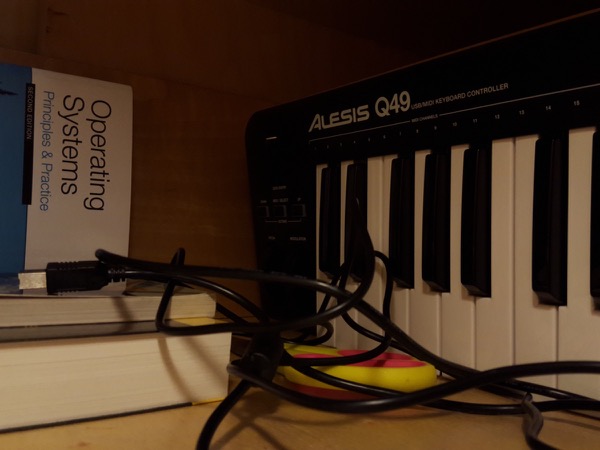

When I first wrote the program for blending multiple images together, I tested my code using a few pictures that I had taken of my desk, since it was the closest and most convenient location. However, the shallow depth of field of these photos made annotation difficult. There are not many sharp corners in these images to choose from.
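The multiresolution blending step can be sketched roughly as follows. This is a simplified version that assumes grayscale float images that are already aligned, a Laplacian stack (no downsampling), and a Gaussian blur written in plain NumPy. The function names, `levels`, and `sigma` are hypothetical choices for illustration, not values from my program.

```python
import numpy as np

def gaussian_kernel(sigma):
    """1D Gaussian kernel that sums to 1."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()

def blur(img, sigma):
    """Separable Gaussian blur of a 2D array, with edge padding."""
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    # Filter along rows, then columns; mode="valid" removes the padding again.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def blend_multires(a, b, mask, levels=4, sigma=2.0):
    """Blend aligned images a and b; mask is 1 where a should dominate."""
    a, b, mask = (np.asarray(x, dtype=float) for x in (a, b, mask))
    out = np.zeros_like(a)
    for _ in range(levels):
        a_low, b_low = blur(a, sigma), blur(b, sigma)
        mask = blur(mask, sigma)  # the seam gets softer for lower frequencies
        # Blend the detail (band-pass) layer of each image with the current mask.
        out += mask * (a - a_low) + (1 - mask) * (b - b_low)
        a, b = a_low, b_low
    # Finally blend what is left, the lowest-frequency layer.
    return out + mask * a + (1 - mask) * b
```

The idea is that fine details are blended across a narrow seam while smooth color and brightness changes are blended across a wide one, which hides the boundary between images.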

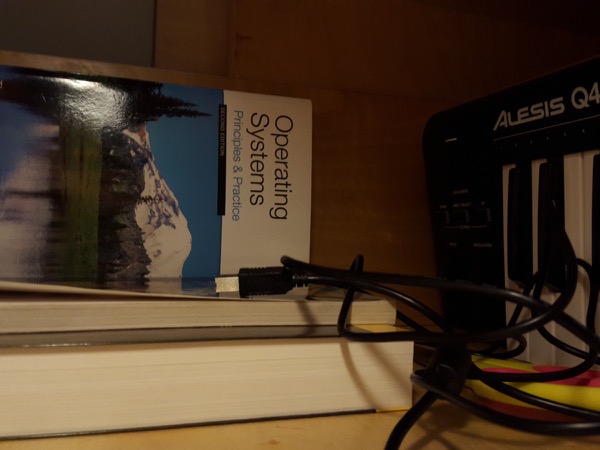

This set of images was easier to annotate, because there are a lot of fine details and textures throughout the scene.

I took some more pictures of some of my belongings, including the cable organizer of my desk, my old broken hard drive, and my water bottle. The blurry background and flat wood texture made it hard to find points to annotate, but I was able to get a good mosaic with some persistence.

Here is another mosaic of the hard drive, but this time it is on the ground.

I wrote a function to project points onto a cylinder (cylindrical projection), and another function to do the inverse cylindrical projection.
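A minimal sketch of such a pair of functions is below, assuming a cylinder whose radius equals the focal length `f` (in pixels) and whose axis passes through the image center. The function names and the `f` and `center` parameters are hypothetical, and how `f` is obtained is left open.

```python
import numpy as np

def cylindrical_project(pts, f, center):
    """Map flat image points (N, 2) onto the unrolled cylinder."""
    pts = np.asarray(pts, dtype=float)
    xc, yc = center
    dx, dy = pts[:, 0] - xc, pts[:, 1] - yc
    theta = np.arctan2(dx, f)          # angle around the cylinder axis
    height = dy / np.hypot(dx, f)      # height on a unit-radius cylinder
    return np.column_stack([f * theta + xc, f * height + yc])

def cylindrical_unproject(pts, f, center):
    """Inverse mapping: unrolled cylinder points (N, 2) back to the flat image."""
    pts = np.asarray(pts, dtype=float)
    xc, yc = center
    theta = (pts[:, 0] - xc) / f
    height = (pts[:, 1] - yc) / f
    x = f * np.tan(theta) + xc
    y = f * height / np.cos(theta) + yc
    return np.column_stack([x, y])
```

The image center maps to itself, and points farther from the center are pulled inward, which is what keeps wide panoramas from blowing up in size.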

The goal of this section was to enable support for very wide panoramas. When you have only 3 images, you can compute homographies between adjacent pairs directly. When you have 4 or more images, you need to multiply matrices together to get a homography between 2 non-adjacent images. Because everything is projected onto a single flat image plane, the images toward the edge of the panorama end up very stretched and very large (my computer runs out of memory).
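The matrix chaining can be sketched like this, assuming `pairwise[i]` is the homography that maps coordinates in image `i` to coordinates in image `i + 1`. The function name and this indexing convention are hypothetical.

```python
import numpy as np

def chain_to_reference(pairwise, ref):
    """Return a list where entry i maps image i into the frame of image ref.

    pairwise[i] is a 3x3 homography from image i to image i + 1.
    """
    n = len(pairwise) + 1
    to_ref = [None] * n
    to_ref[ref] = np.eye(3)
    # Images before the reference: step forward one image, then reuse the
    # already computed mapping from there to the reference.
    for i in range(ref - 1, -1, -1):
        to_ref[i] = to_ref[i + 1] @ pairwise[i]
    # Images after the reference: step backward using the inverse homography.
    for i in range(ref + 1, n):
        to_ref[i] = to_ref[i - 1] @ np.linalg.inv(pairwise[i - 1])
    return to_ref
```

Picking a reference image near the middle of the sequence keeps the chains short, but with a flat image plane the outermost images still get stretched as the field of view grows.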

One way to fix this is to use cylindrical projection. Instead of acting as a wide angle camera with a flat image plane, the cylindrical projection panorama pretends that the input images are warped onto the inner surface of a cylinder. You get the resulting image by peeling off the surface of the cylinder and flattening it, like wrapping paper or tin foil. I used my inverse cylindrical projection function to do the image interpolation, and I used the regular cylindrical projection function to translate the annotated keypoints. Then, I used my regular projective warping code to align the images. (I should have been able to use a translation instead of a projective transformation, but I didn't hold the camera exactly still when I took these photos.)
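To show why the inverse projection is the one used for interpolation, here is a rough sketch of warping a whole image onto the cylinder. It assumes a 2D grayscale image, bilinear sampling, and a focal length `f` in pixels. The name `warp_to_cylinder` is hypothetical and this is not my exact code.

```python
import numpy as np

def warp_to_cylinder(img, f):
    """Warp a 2D grayscale image (at least 2x2) onto an unrolled cylinder."""
    img = np.asarray(img, dtype=float)
    h, w = img.shape
    xc, yc = (w - 1) / 2.0, (h - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w].astype(float)
    # Inverse cylindrical projection: for each output pixel, find the
    # location in the flat source image that it comes from.
    theta = (xs - xc) / f
    src_x = f * np.tan(theta) + xc
    src_y = (ys - yc) / np.cos(theta) + yc
    valid = (np.abs(theta) < np.pi / 2)
    valid &= (src_x >= 0) & (src_x <= w - 1) & (src_y >= 0) & (src_y <= h - 1)
    # Bilinear interpolation between the four surrounding source pixels.
    sx = np.clip(src_x, 0, w - 1)
    sy = np.clip(src_y, 0, h - 1)
    x0 = np.minimum(np.floor(sx).astype(int), w - 2)
    y0 = np.minimum(np.floor(sy).astype(int), h - 2)
    ax, ay = sx - x0, sy - y0
    top = (1 - ax) * img[y0, x0] + ax * img[y0, x0 + 1]
    bottom = (1 - ax) * img[y0 + 1, x0] + ax * img[y0 + 1, x0 + 1]
    out = (1 - ay) * top + ay * bottom
    out[~valid] = 0.0  # pixels with no source data stay black
    return out
```

Looping over output pixels and sampling the source this way avoids the holes you would get from pushing source pixels forward onto the cylinder. A color image could be handled by applying the same warp to each channel.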

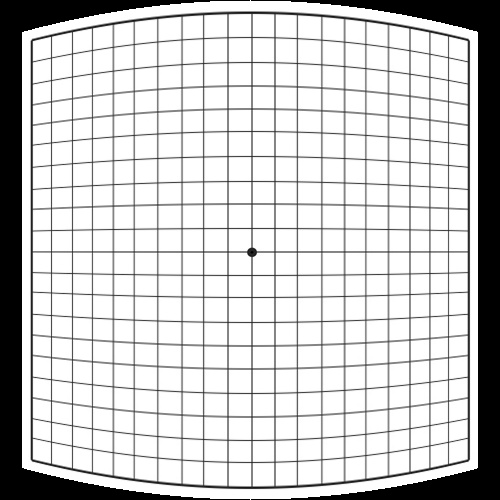

This was just a test image to get a sense of what the cylindrical projection was doing.

I originally took a bunch of pictures in a 360° rotation, because I wanted to do the 360° interactive image, but I realized that I didn't have the patience to label so many images. Maybe I'll try that part in Part B after we have automatic annotation. But for now, I just took 5 of these images (from 1 corner) and used them in a panorama with cylindrical projection.

Most of my time on this project was spent labeling points and trying to figure out what kinds of photos would make a good panorama. The coding was pretty straightforward. So, I can't wait until we have automatic annotation of points.